Overview

Evaluating your results helps the team understand Manifest’s impact on your operations and estimate the impact of a broader deployment within your organization. It is essential to collect metrics that support your objectives and that can be compared to a baseline established with traditional (pre-Manifest) methods.

The following sections provide tips for performing a Manifest evaluation.

Prerequisites

- A well-established baseline against which to compare Manifest usage, so that you fully understand the impact on your operations. A good evaluation acts as an A/B test, so the ability to make side-by-side comparisons is the key to success.

- Current state and current process should be documented

- Workforce’s effectiveness or typical results should be documented

- Metrics of success should map to how things are measured today

- At least one procedure authored in Manifest to compare to the baseline procedure

- Asset tag printed and placed on equipment

- Equipment scheduled or available

- Testing resources scheduled or available

Preliminary Evaluation

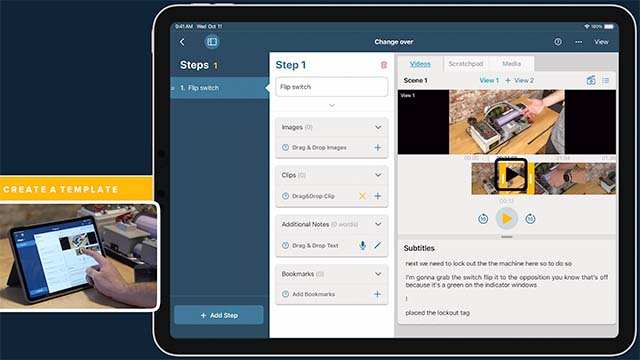

Before conducting a formal test and evaluation, it’s recommended to have people familiar with the project and subject matter review the authored content in Manifest. This provides a preliminary review of the content captured in Manifest and an early evaluation of how it compares to the current baseline, so that improvements can be identified before formal testing occurs.

This gives subject matter experts (SMEs) the opportunity to optimize the authored content in Manifest for maximum impact.

Upskill Test Group – Device Familiarization & Manifest 101

If using HoloLens 2, it’s recommended to train testers on how to use the hardware.

Device Familiarization (recommended 15 mins)

- From the device, visit the Tips application to practice using your hands and voice for interacting with holograms and mixed reality

- Next, play a quick game of RoboRaid

Manifest 101 (recommended 10 mins)

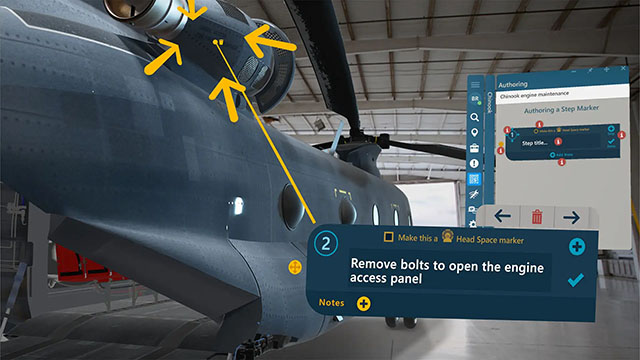

Once the test group is comfortable operating the device itself, have them get familiar with Manifest by following the Guided Tour within the application.

Once the test group has completed this exercise, you’re ready to conduct a formal evaluation.

Perform Test

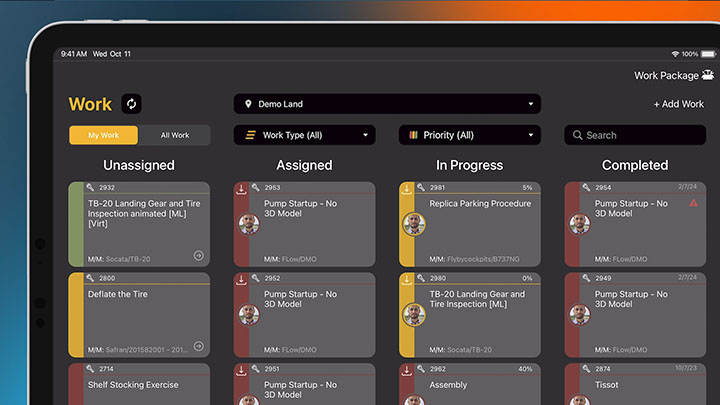

Gather quantitative data by performing A/B tests in which testers perform a task first using the current-state baseline method and then using Manifest. Be thoughtful and intentional about the data collection process. As noted in the overview, it is essential to collect metrics that support your objectives and that can be compared to a baseline established with traditional (pre-Manifest) methods.
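As an illustration of the A/B comparison, the sketch below computes the relative change in average job completion time between the baseline method and Manifest. It is a minimal example, not a prescribed analysis: the function name `percent_change` and the sample times are hypothetical, and the code assumes you have recorded one completion time per tester for each method.

```python
from statistics import mean


def percent_change(baseline, manifest):
    """Relative change of the Manifest mean versus the baseline mean, in percent.

    A negative result means the task took less time with Manifest.
    """
    base_avg = mean(baseline)
    return (mean(manifest) - base_avg) / base_avg * 100.0


# Hypothetical completion times in minutes, one entry per tester (made-up data)
baseline_minutes = [42.0, 38.0, 45.0, 40.0]
manifest_minutes = [30.0, 29.0, 35.0, 32.0]

change = percent_change(baseline_minutes, manifest_minutes)
print(f"Job completion time changed by {change:.1f}%")
```

The same calculation can be applied to any metric that is measured the same way for both methods, which is why mapping your metrics to how things are measured today matters.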

Qualitative measures may add to your evaluation and understanding. User surveys are a great way to obtain insights that complement the numbers.

- Did users find it easy to use?

- Did they learn some tricks and tips that would be worth collecting for others?

- What are their recommendations to help others learn and use Manifest?

Capturing these insights will be valuable as you move to deploy Manifest broadly into your operations and expand usage. Be sure to include observations about the repeatability of Manifest’s impact and suggestions on how to scale the solution within your organization.

Sample Qualitative & Quantitative Metrics

Should you need guidance on which metrics to use when measuring Manifest, the following are areas in which we’ve seen clients have success:

- Increase operational efficiency in Operations, Maintenance, and Inspection

- Increase availability rate or operational rate of machines

- Reduce the number of support calls caused by a problem

- Decrease time to productivity

- First time fix or completion (completing task on first attempt)

- Job completion time

- Increase product quality

- Reduce errors

- Reduce troubleshooting and time to resolution

- Use Manifest Connect for “see what I see” troubleshooting

- Employ fault flag and resolution to quickly identify and resolve issues

- Enable remote work and assistance

- Enable remote assessment

- Real time review of completed work

- Review of evidence

- Decrease training and onboarding times
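
Several of the metrics listed above, such as first-time completion and error reduction, reduce to simple rates that can be computed the same way for the baseline and for Manifest. The sketch below shows one possible way to do that. The `TaskRecord` structure, its field names, and the sample records are all hypothetical and only stand in for whatever your team actually records during testing.

```python
from dataclasses import dataclass


@dataclass
class TaskRecord:
    """One observed task attempt. All field names are hypothetical."""
    method: str                 # "baseline" or "manifest"
    completed_first_try: bool   # True if the task was finished on the first attempt
    errors: int                 # number of errors observed during the attempt


def summarize(records, method):
    """Return (first-time completion rate, mean errors per task) for one method."""
    rows = [r for r in records if r.method == method]
    if not rows:
        raise ValueError(f"no records for method {method!r}")
    first_time_rate = sum(r.completed_first_try for r in rows) / len(rows)
    mean_errors = sum(r.errors for r in rows) / len(rows)
    return first_time_rate, mean_errors


# Made-up sample records, for illustration only
records = [
    TaskRecord("baseline", True, 1),
    TaskRecord("baseline", False, 3),
    TaskRecord("baseline", False, 2),
    TaskRecord("baseline", True, 0),
    TaskRecord("manifest", True, 0),
    TaskRecord("manifest", True, 1),
    TaskRecord("manifest", False, 1),
    TaskRecord("manifest", True, 0),
]

for method in ("baseline", "manifest"):
    rate, errors = summarize(records, method)
    print(f"{method}: first-time completion {rate:.0%}, {errors:.2f} errors per task")
```

With only a handful of testers, treat differences like these as indicative rather than conclusive, and pair them with the qualitative survey feedback.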